Goal setting is a critical step in the performance testing process since it defines what's 'good' or 'acceptable'.

What's the Goal Setting Process?

There are three steps in defining performance test goals.

The first step is determining the right metrics to track: the kinds of performance you’re interested in and the quantitative measures that capture them. Start with a few key metrics that are highly relevant to your application and focus on optimizing those before moving on to a larger list.

Some of the most common performance metrics include:

- Response times measure how long it takes an application to respond to a request.

- Resource utilization measures the CPU and memory usage for a given application.

- Throughput measures transactions per second under a given load condition.

- Workload measures the number of concurrent tasks or users.
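
As a rough illustration of how response time and throughput figures might be derived from raw test output, the sketch below summarizes a list of request samples. The `Sample` record, its field names, and the choice of a nearest-rank 95th percentile are assumptions made for this example, not the output format of any particular tool.

```python
import math
from dataclasses import dataclass


@dataclass
class Sample:
    start: float     # seconds since the test began (hypothetical field)
    duration: float  # response time in seconds (hypothetical field)


def percentile(values, pct):
    """Nearest-rank percentile of a non-empty list, with pct in (0, 100]."""
    ordered = sorted(values)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(samples):
    """Return response-time and throughput figures for one test run."""
    durations = [s.duration for s in samples]
    # Wall-clock span from the first request sent to the last response received.
    first_start = min(s.start for s in samples)
    last_end = max(s.start + s.duration for s in samples)
    return {
        "avg_response_s": sum(durations) / len(durations),
        "p95_response_s": percentile(durations, 95),
        "throughput_rps": len(samples) / (last_end - first_start),
    }
```

A percentile is reported alongside the average here because a handful of slow requests can hide behind a healthy-looking mean.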

The second step is defining the minimum thresholds for each metric and coming up with effective test cases to measure reliability, capacity, regressions, and other elements for each one. For example, a service level agreement (SLA) may specify a minimum performance threshold that must be met under a contract, and you may need to estimate a realistic load to test it against.
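
One way to make thresholds checkable is to write them down as data and compare each test run against them. The following is a minimal sketch under that assumption; the metric names and limits are hypothetical and would come from your own SLA or stakeholder discussions.

```python
def check_thresholds(measured, thresholds):
    """Return a list of human-readable violations (empty if all pass).

    thresholds maps metric name -> (kind, limit), where kind is "max"
    (measured must not exceed limit) or "min" (must not fall below it).
    """
    violations = []
    for name, (kind, limit) in thresholds.items():
        value = measured.get(name)
        if value is None:
            violations.append(f"{name}: no measurement")
        elif kind == "max" and value > limit:
            violations.append(f"{name}: {value} exceeds maximum {limit}")
        elif kind == "min" and value < limit:
            violations.append(f"{name}: {value} is below minimum {limit}")
    return violations


# Hypothetical limits and results, for illustration only.
thresholds = {
    "p95_response_s": ("max", 0.5),
    "throughput_rps": ("min", 100),
    "error_rate": ("max", 0.01),
}
measured = {"p95_response_s": 0.62, "throughput_rps": 140, "error_rate": 0.002}
violations = check_thresholds(measured, thresholds)
```

With these sample values, `violations` holds a single entry, for the 95th-percentile response time.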

Finally, the third step is running these tests to come up with baseline measurements. These figures are necessary to determine if any code changes create performance improvements or introduce regressions. After running initial performance tests, the team can look at long-term trends to see how performance has evolved over time.
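
A baseline comparison can be sketched as follows. The 10% tolerance, the metric names, and the `higher_is_better` parameter are assumptions chosen for illustration; a real setup would tune the tolerance to the amount of run-to-run noise it observes.

```python
def compare_to_baseline(baseline, current, tolerance=0.10, higher_is_better=()):
    """Classify each metric as 'improved', 'regressed' or 'unchanged'.

    A change counts only if it exceeds `tolerance` (a fraction of the
    baseline value), which filters out ordinary run-to-run noise.
    """
    report = {}
    for name, base in baseline.items():
        if name not in current or base == 0:
            continue  # nothing meaningful to compare against
        change = (current[name] - base) / base
        if name in higher_is_better:
            change = -change  # flip so that positive always means "worse"
        if change > tolerance:
            report[name] = "regressed"
        elif change < -tolerance:
            report[name] = "improved"
        else:
            report[name] = "unchanged"
    return report
```

Metrics such as throughput, where a larger number is better, are passed in `higher_is_better` so that a drop is reported as a regression rather than an improvement.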

The performance goals defined during this process are usually written as part of the workload specification and should be reviewed with all relevant stakeholders.

Meet with Relevant Team Members

Developers and test engineers might know the code base really well, but they usually don't communicate with users. When coming up with performance goals, it's important to gather input from both technical and business teams to arrive at ballpark figures. This works better than guessing or relying solely on historical analytics to make predictions.

Here are a few scenarios that show why:

- Marketers have an upcoming campaign that will significantly increase the number of users in the near term, which is impossible to predict by looking at historical data.

- Lawyers know specific service level agreement (SLA) requirements that should define minimum performance levels under any load conditions.

- Stakeholders may have specific ideas about what elements of performance they want to test based on their conversations with potential customers.

The best way to avoid miscommunication is to ask the direct recipient of the testing results who else will need to approve them. Meet with these additional stakeholders and make sure their expectations are reflected in the list of performance test goals. That way, there's less risk of failing to meet expectations.

Collect & Analyze Real Usage Data

Most performance tests focus on common user workflows. For example, every ecommerce application should load test its checkout process. It's easy to assume that users follow a certain workflow, but the best way to ensure you're testing the right things is to look at real usage data from Google Analytics or a similar tool.

Some important questions to ask include:

- What is the sequence of pages that a user follows?

E.g. A product page, shopping cart, payment info, shipping info and order confirmation page might be an ecommerce workflow for a standard checkout process.

- What is the think time associated with each action?

E.g. Users might wait 30 seconds before adding a product to a shopping cart, which influences the performance of the application under certain loads.

- What are the most popular workflows?

E.g. Do most users follow one set of actions far more often than any other?
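
The questions above can be explored with a short script once page-view events have been exported from an analytics tool. The sketch below assumes a hypothetical export of `(session_id, timestamp_s, page)` tuples. It treats the gap between consecutive page views as think time, which is only an approximation because that gap also includes page load time.

```python
from collections import Counter, defaultdict


def analyze_sessions(events):
    """events: iterable of (session_id, timestamp_s, page) tuples.

    Returns (workflow_counts, avg_think_time), where workflow_counts is a
    Counter of page sequences and avg_think_time maps each page to the mean
    number of seconds users spent on it before moving to the next page.
    """
    sessions = defaultdict(list)
    for session_id, timestamp, page in events:
        sessions[session_id].append((timestamp, page))

    workflow_counts = Counter()
    gaps = defaultdict(list)
    for visits in sessions.values():
        visits.sort()  # order each session chronologically
        workflow_counts[tuple(page for _, page in visits)] += 1
        for (t0, page), (t1, _) in zip(visits, visits[1:]):
            gaps[page].append(t1 - t0)

    avg_think_time = {page: sum(g) / len(g) for page, g in gaps.items()}
    return workflow_counts, avg_think_time
```

Calling `workflow_counts.most_common(3)` on the result lists the three most frequent paths, which are natural candidates for the first load test scenarios.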

In addition to workflow data, server analytics can tell you which processes use the most system resources. Even if they don't receive a high proportion of traffic, these processes may be important to test since they consume an inordinate amount of resources per request. Processes with higher error rates may also be worth performance testing.

Simplify Load Testing Processes

Most performance tests are protocol-based, which means they send a request and measure how long it takes to receive a response. Unfortunately, these tests don't capture how long it takes to render the HTML, execute the JavaScript, or complete the other work that a real browser performs. Measuring those requires a browser-based performance testing platform that spins up real browsers to gauge performance.
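
To make the limitation concrete, here is a minimal sketch of a protocol-level measurement using only the Python standard library. The `time_request` helper is a name invented for this example and is not part of any load testing tool.

```python
import time
import urllib.request


def time_request(url, timeout=10.0):
    """Return (status_code, seconds) for a single HTTP GET.

    The timer stops once the response body has been read, so the figure
    covers network and server time only, not rendering or script execution.
    """
    started = time.perf_counter()
    with urllib.request.urlopen(url, timeout=timeout) as response:
        response.read()
        status = response.status
    return status, time.perf_counter() - started
```

Nothing in this measurement parses the HTML or runs any JavaScript, which is why a page can look fast at the protocol level and still feel slow in a browser.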

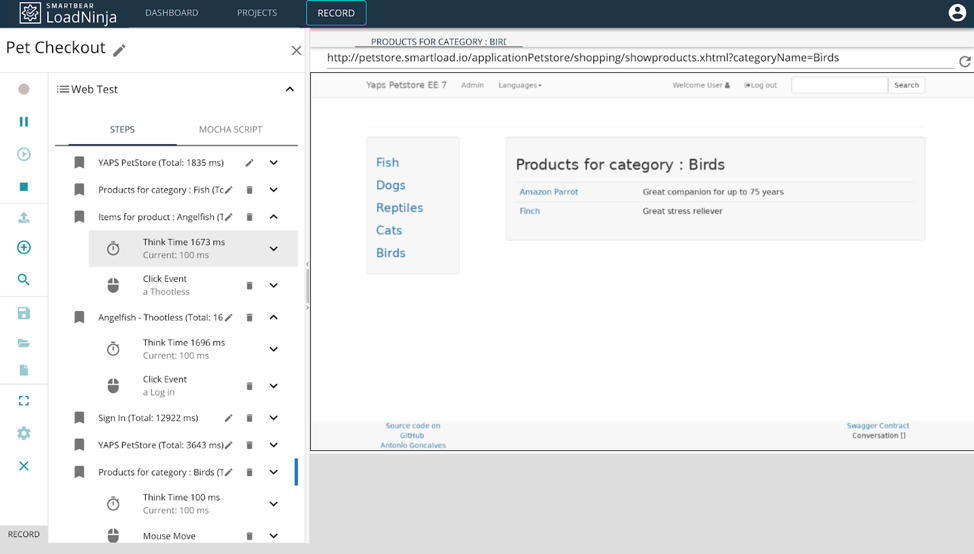

LoadNinja’s Record and Playback Functionality – Source: LoadNinja

LoadNinja, on the other hand, provides a scalable, browser-based load testing solution that makes it easy to get all of the metrics and data you need. In addition, the record and playback capabilities mean you can create load tests by going through user actions rather than coding complex scripts and dealing with problems like dynamic correlation. Test engineers don’t have to spend hours creating and maintaining brittle test scripts.

Finally, LoadNinja makes it easy to incorporate load tests into your continuous integration processes and send reporting directly to Zephyr for Jira or other test management platforms. That way, stakeholders can easily access the data and insights, while developers can diagnose and fix any performance bottlenecks. It’s an easy way to keep everyone on the same page throughout the performance testing process.

The Bottom Line

Goal setting is an important part of the performance testing process because it's impossible to define what's 'good' or 'acceptable' without it. By involving both business and technical teams in the goal-setting process, you can ensure you're measuring everything that matters and that the application remains performant for users.

LoadNinja simplifies the load testing process. It offers instant record-and-playback functionality and integrates with common continuous integration platforms and other tools. It also provides a wider range of browser-based metrics that show whether you're hitting your performance goals.
