Load testing may seem straightforward, but there are several common mistakes that can lead to inaccurate results.

What is Load Testing?

Suppose your team has built a software product with extensive unit and functional tests. These tests catch functional errors, but unlike load tests, they don't measure how the application performs under real-world usage. An N+1 database query, for example, could easily pass unit and functional tests yet severely degrade performance under real-world load.

Load tests measure response times, throughput rates, and resource utilization so you can identify these easy-to-miss bottlenecks before deploying to production. Stress tests, a close cousin of load tests, go further: they deliberately push the application until it fails, which helps you analyze risks and adjust the code so the application breaks more gracefully.
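A stress test is often run as a stepped ramp: raise the number of virtual users in stages and stop at the first stage where errors exceed an acceptable level. The sketch below assumes a hypothetical `run_step(users)` hook that runs one stage and returns the observed error rate; the step sizes and the 5% threshold are illustrative defaults, not recommendations.

```python
from typing import Callable, Optional

def find_breaking_point(
    run_step: Callable[[int], float],
    start_users: int = 50,
    step_users: int = 50,
    max_users: int = 1000,
    max_error_rate: float = 0.05,
) -> Optional[int]:
    """Raise the load stage by stage until the error rate crosses the threshold.

    run_step(users) is a hypothetical hook that runs one load stage with that
    many virtual users and returns the observed error rate (0.0 to 1.0).
    Returns the first user count that breached the threshold, or None if the
    application held up all the way to max_users.
    """
    users = start_users
    while users <= max_users:
        error_rate = run_step(users)
        if error_rate > max_error_rate:
            return users
        users += step_users
    return None
```

For example, with a simulated service that starts failing above 300 users, `find_breaking_point(lambda users: 0.0 if users <= 300 else 0.25)` returns 350, the first stage that broke.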

There are four steps to the load testing process:

- Gather Requirements – Determine the most critical functions that require testing, such as the checkout process for an e-commerce website.

- Map Out Workflows – Identify how users interact with the application by reviewing data from application performance monitoring (APM) tools. For instance, what are the steps of the checkout process?

- Establish a Baseline – Run initial tests to record baseline performance. Later test runs can then be compared against the baseline to pinpoint problems.

- Automate & Integrate – Make load testing part of your continuous integration and continuous delivery (CI/CD) process and integrate it with the testing tools you already use.
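The baseline and CI/CD steps fit together naturally: store the baseline run's metrics, then have the CI job compare each new run against them and fail the build on a regression. Here is a minimal Python sketch under those assumptions; the metric names, numbers, and the flat 10% tolerance are all hypothetical.

```python
# Hypothetical baseline numbers; a real pipeline would load these from the
# stored results of the baseline run. Every metric here is "lower is better".
BASELINE = {"avg_response_ms": 420.0, "p95_response_ms": 900.0, "error_rate": 0.01}

def find_regressions(baseline: dict, current: dict, tolerance: float = 0.10) -> dict:
    """Return metrics that are worse than baseline by more than `tolerance` (10% default)."""
    regressions = {}
    for name, base_value in baseline.items():
        value = current.get(name)
        if value is not None and value > base_value * (1 + tolerance):
            regressions[name] = (base_value, value)
    return regressions

def main(current: dict) -> int:
    """Print any regressions and return a process exit code for the CI job."""
    regressions = find_regressions(BASELINE, current)
    for name, (base, value) in regressions.items():
        print(f"REGRESSION {name}: baseline={base} current={value}")
    return 1 if regressions else 0

# Hypothetical numbers from the current run: p95 regressed, the rest did not.
exit_code = main({"avg_response_ms": 440.0, "p95_response_ms": 1200.0, "error_rate": 0.01})
```

In a real pipeline you would finish with `sys.exit(exit_code)` so that a non-zero code fails the build. A single flat tolerance keeps the example short; noisy metrics often need wider, per-metric tolerances.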

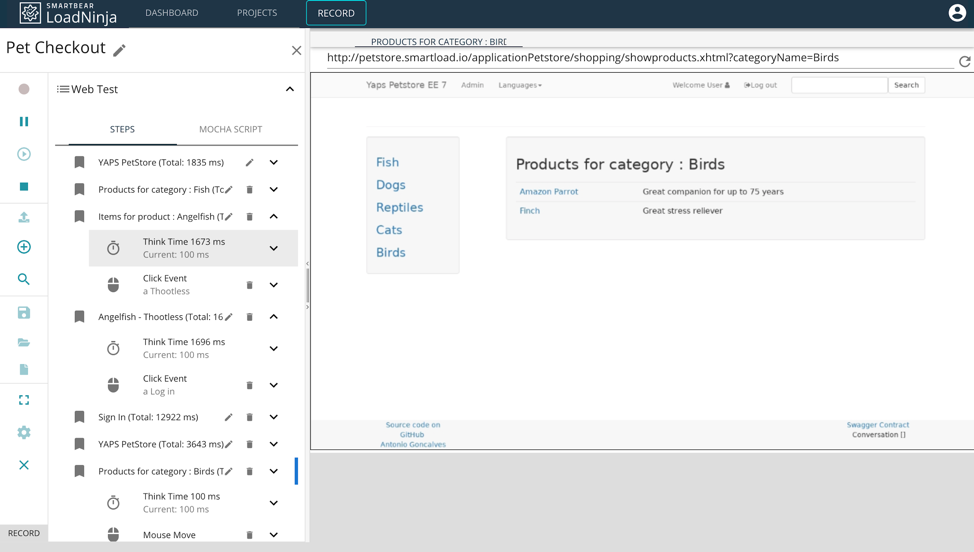

LoadNinja makes real-browser load testing simple with intuitive record and playback functionality and DOM-based diagnostic tools. After generating test scripts, you can easily integrate them into numerous continuous integration environments, such as Jenkins, and project management solutions, such as Zephyr for JIRA.

LoadNinja’s Record and Playback Functionality – Source: LoadNinja

Mistake #1: Overlooking User Think Time

Most load tests are protocol-based: tools such as JMeter simply send requests to a server and measure the responses. The problem is that these tests don't account for HTML rendering or JavaScript execution time, which can be significant in modern JavaScript-heavy web applications, so the results may not reflect the true user experience.

Browser-based tools, such as LoadNinja, spin up real cloud-based browser instances and replay test scripts that mimic actual users. When troubleshooting bottlenecks, developers can dig into specific virtual-user sessions (and the browser DOM) to find issues rather than rely on high-level performance statistics.

A common mistake with browser-based tests, however, is failing to account for user think time. When you record a script, it's easy to click through the workflow much faster than a real user would. For accurate results, pause between steps for as long as a real user plausibly would.
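To see why skipping think time skews results, consider a closed workload in which each virtual user sends a request, waits for the response, pauses, and repeats. The interactive response time law from queueing theory gives the resulting request rate as X = N / (R + Z), where N is the number of users, R the response time, and Z the think time. The numbers below are hypothetical.

```python
def request_rate(users: int, response_time_s: float, think_time_s: float) -> float:
    """Steady-state requests per second for a closed workload: X = N / (R + Z)."""
    return users / (response_time_s + think_time_s)

# Hypothetical scenario: 100 virtual users, 0.5 s average response time.
no_think = request_rate(100, 0.5, 0.0)    # 200 requests/s
with_think = request_rate(100, 0.5, 5.0)  # about 18.2 requests/s
print(f"{no_think:.1f} vs {with_think:.1f} req/s, {no_think / with_think:.0f}x the load")
```

Under these assumptions, the same 100 users generate 11 times the request rate when think time is dropped (and that holds only if response time stays constant, which it usually won't under the heavier load), so the test no longer simulates the traffic it claims to.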

LoadNinja lets you capture user think time while recording a session or define think time for each step afterward. That way, your tests reflect how real users behave and produce more accurate results.
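If you script tests by hand instead of recording them, the same idea looks roughly like this: run each step of a session, then sleep for a randomized think time before the next one. This is a generic Python sketch, not LoadNinja's implementation; the 2 to 6 second range is an assumption you would replace with timings observed from real users. The `rng` and `sleep` parameters are injectable so the function can be tested without actually waiting.

```python
import random
import time
from typing import Callable, List, Optional, Sequence, Tuple

def run_session(
    steps: Sequence[Callable[[], None]],
    think_time_s: Tuple[float, float] = (2.0, 6.0),
    rng: Optional[random.Random] = None,
    sleep: Callable[[float], None] = time.sleep,
) -> List[float]:
    """Run each step in order, pausing a random think time between steps.

    Returns the pauses taken, in seconds. The default 2-6 s range is an
    assumption; replace it with timings observed from real users.
    """
    if rng is None:
        rng = random.Random()
    pauses = []
    for index, step in enumerate(steps):
        step()
        if index < len(steps) - 1:  # no pause after the final step
            pause = rng.uniform(*think_time_s)
            sleep(pause)
            pauses.append(pause)
    return pauses
```

Randomizing the pause, rather than using a fixed delay, keeps virtual users from firing requests in lockstep.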

Mistake #2: Using Hardcoded Data in Requests

Many performance engineers create scripts with hard-coded parameter values. For instance, a test script for an e-commerce shop may click on the same product and go through the checkout process tens of thousands of times to gauge performance. The problem is that one product page may not perform the same as every other product page on the website, and a page requested over and over may also end up cached, making it look faster than a typical page.

You don’t need to parameterize every value, but it’s important to think through where performance may vary with the input and focus on those areas.

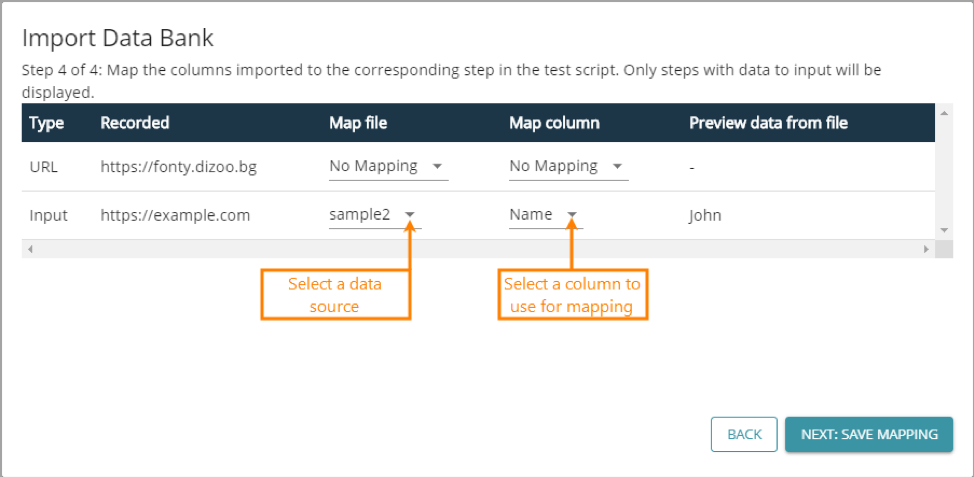

LoadNinja makes it easy to incorporate variables using databanks, or CSV or TXT files, containing different input values. Data-driven load tests run test commands for each row in the databank. It creates a more robust test suite, which then accounts for a wider range of possibilities. Best of all, you don't need to re-record the scenario multiple times.

Example of the Databank Input Process – Source: LoadNinja
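Outside LoadNinja, the databank idea boils down to reading rows from a CSV file and handing a different row to each virtual user. Below is a minimal Python sketch using the standard `csv` module; the `product_id` and `quantity` columns and the round-robin assignment are assumptions for illustration, not LoadNinja's databank format.

```python
import csv
import io
from itertools import cycle

# Hypothetical databank contents; a real test would read these from a file.
DATABANK_CSV = """product_id,quantity
sku-1001,1
sku-2040,3
sku-3312,2
"""

def load_databank(text: str) -> list:
    """Parse CSV text into one dict per row, keyed by the header names."""
    return list(csv.DictReader(io.StringIO(text)))

def assign_rows(rows: list, virtual_users: int) -> dict:
    """Give each virtual user the next row, wrapping around when rows run out."""
    if not rows:
        raise ValueError("databank is empty")
    row_source = cycle(rows)
    return {vu: next(row_source) for vu in range(virtual_users)}

rows = load_databank(DATABANK_CSV)
assignments = assign_rows(rows, virtual_users=5)
for vu, row in assignments.items():
    print(f"VU {vu}: product={row['product_id']} qty={row['quantity']}")
```

With three rows and five virtual users, users 3 and 4 wrap around to the first and second rows. If reusing rows would distort results (for example, checking out the same cart twice), supply at least as many rows as virtual users.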

Mistake #3: Focusing Too Much on Response Times

Response times are the most obvious load test metric, but they aren't the only thing that matters. For example, fast response times aren't very relevant if an application has a high error rate. Effective performance tests measure many different metrics to get a holistic view of how an application behaves under different load conditions.

Some of the most important metrics include:

- Average Response Times – The average amount of time that passes between a client's initial request and the last byte of a server's response.

- Peak Response Times – The longest roundtrip time from request to response, rather than the average, which is helpful for finding anomalies.

- Error Rates – The percentage of error-generating requests compared to total requests. While some errors are common, it's best to minimize this percentage.

- Requests Per Second – The raw number of requests sent to the server each second, regardless of the number of users.

- Concurrent Users – The number of virtual users that are active at a given point in time. Unlike requests per second, this tells you how many users you can support.

- Throughput – The amount of bandwidth consumed. Unexpectedly high numbers could suggest the need to compress resources, such as JavaScript, CSS, or image assets.
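Most of these metrics are simple aggregates over per-request results. The sketch below assumes each request is recorded as a hypothetical `Sample` with its elapsed time, success flag, and bytes received, and that you know the length of the measurement window. Concurrent users are left out because they come from the test's user schedule rather than from individual requests.

```python
from dataclasses import dataclass
from statistics import mean

@dataclass
class Sample:
    elapsed_ms: float    # time from sending the request to the last byte received
    ok: bool             # False if the request produced an error
    bytes_received: int

def summarize(samples: list, duration_s: float) -> dict:
    """Aggregate per-request samples collected over a window of duration_s seconds."""
    if not samples or duration_s <= 0:
        raise ValueError("need at least one sample and a positive duration")
    times = [s.elapsed_ms for s in samples]
    errors = sum(1 for s in samples if not s.ok)
    return {
        "avg_response_ms": mean(times),
        "peak_response_ms": max(times),
        "error_rate": errors / len(samples),
        "requests_per_s": len(samples) / duration_s,
        "throughput_bytes_per_s": sum(s.bytes_received for s in samples) / duration_s,
    }

report = summarize(
    [Sample(100.0, True, 2000), Sample(300.0, True, 4000),
     Sample(200.0, False, 0), Sample(400.0, True, 2000)],
    duration_s=2.0,
)
print(report)
```

For example, the four samples above (100, 300, 200, and 400 ms over a 2-second window, one of them an error) give an average of 250 ms, a peak of 400 ms, a 25% error rate, and 2 requests per second.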

It's equally important to help developers diagnose performance bottlenecks. After all, it's not helpful to tell a developer that the checkout process takes too long without telling them which step experienced issues and what occurred. It's better to show them exactly what happened.
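A per-step breakdown is one simple way to point developers at the problem. The sketch below assumes you've collected `(step_name, elapsed_ms)` pairs from many sessions and ranks steps by average duration; the checkout step names in the example are hypothetical.

```python
from collections import defaultdict
from statistics import mean

def slowest_steps(timings, top: int = 3) -> list:
    """Rank steps by average elapsed time, slowest first.

    `timings` is an iterable of (step_name, elapsed_ms) pairs gathered from
    many virtual-user sessions.
    """
    by_step = defaultdict(list)
    for step, elapsed_ms in timings:
        by_step[step].append(elapsed_ms)
    averages = {step: mean(values) for step, values in by_step.items()}
    return sorted(averages.items(), key=lambda item: item[1], reverse=True)[:top]

# Hypothetical timings from two checkout sessions.
timings = [
    ("view cart", 120.0), ("enter shipping", 180.0), ("submit payment", 2400.0),
    ("view cart", 140.0), ("enter shipping", 200.0), ("submit payment", 2600.0),
]
for step, avg_ms in slowest_steps(timings):
    print(f"{step}: {avg_ms:.0f} ms")
```

Averages can hide outliers, so in practice you might also rank steps by their peak or a high percentile.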

LoadNinja tracks all of these metrics in a single dashboard and lets you drill down into individual virtual user (VU) sessions to find the cause.

The Bottom Line

These mistakes are easy to make, but with tools like LoadNinja, even easier to avoid. It's simple to bring load tests in-house and incorporate them into your existing CI/CD process. The key is ensuring these tests are designed to truly mimic users and enable developers to find problems. LoadNinja gives you that ability and more.

Sign up for a free trial of LoadNinja

You’ll see how easy it is to perform fast and effective load tests.